All Categories

Featured

Table of Contents

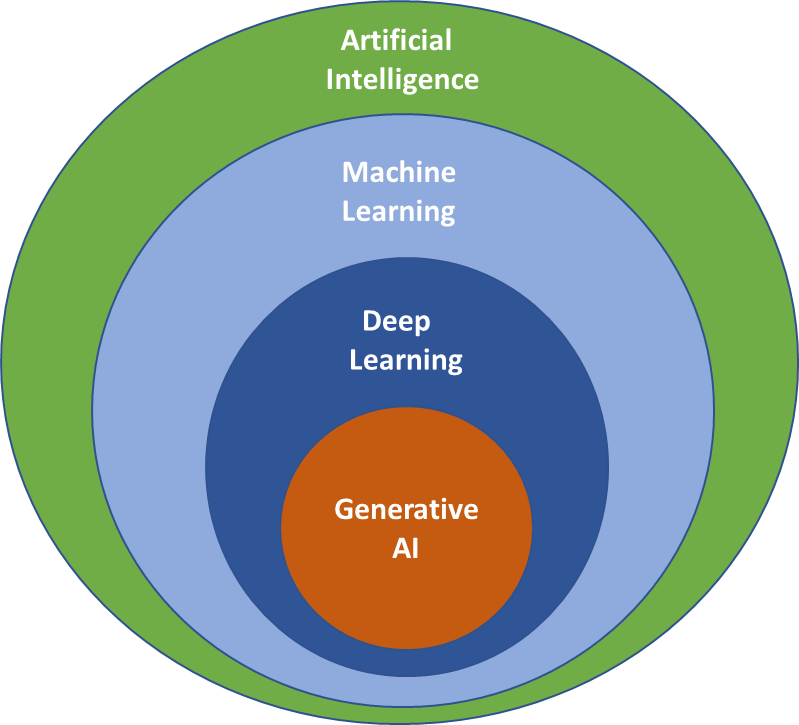

For instance, such models are trained, using millions of examples, to predict whether a certain X-ray shows signs of a tumor or whether a particular borrower is likely to default on a loan. Generative AI can be thought of as a machine-learning model that is trained to create new data, rather than to make a prediction about a specific dataset.

"When it concerns the actual machinery underlying generative AI and various other kinds of AI, the distinctions can be a bit fuzzy. Usually, the very same algorithms can be used for both," says Phillip Isola, an associate teacher of electrical design and computer technology at MIT, and a participant of the Computer Scientific Research and Expert System Lab (CSAIL).

But one big difference is that ChatGPT is far larger and more complex, with billions of parameters. And it has been trained on an enormous amount of data: in this case, much of the publicly available text on the internet. In this huge corpus of text, words and sentences appear in sequences with certain dependencies.
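The core idea can be shown at toy scale with a bigram counter: learn from a corpus which words tend to follow which, then use those statistics to propose what comes next. This is a minimal illustrative sketch that assumes simple whitespace-split words. The function names are hypothetical. A model like ChatGPT learns far richer, longer-range dependencies with a neural network rather than a lookup table of counts.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def train_bigram(text: str) -> Dict[str, Counter]:
    """Count, for every word in the corpus, which words follow it and how often."""
    words = text.lower().split()
    follows: Dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def propose_next(model: Dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen after `word`, or None if `word` was never seen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


corpus = "the cat sat on the mat . the cat ate the fish ."
model = train_bigram(corpus)
print(propose_next(model, "the"))  # → cat
```

In this corpus "the" is followed by "cat" twice and by "mat" and "fish" once each, so "cat" is proposed.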

The model learns the patterns of these blocks of text and uses this knowledge to propose what might come next.

While bigger datasets are one catalyst that led to the generative AI boom, a variety of major research advances also led to more complex deep-learning architectures. In 2014, a machine-learning architecture known as a generative adversarial network (GAN) was proposed by researchers at the University of Montreal.

GANs use two models that work in tandem: one learns to generate a target output (such as an image), and the other, the discriminator, learns to distinguish real data from the generator's output. The generator tries to fool the discriminator, and in the process learns to make more realistic outputs. The image generator StyleGAN is based on these types of models. Diffusion models were introduced a year later by researchers at Stanford University and the University of California at Berkeley. By iteratively refining their output, these models learn to generate new data samples that resemble samples in a training dataset, and they have been used to create realistic-looking images.
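Both training ideas have standard formal versions. The adversarial game is usually written as the minimax objective from the 2014 GAN paper. In it, $G$ is the generator and $D(x)$ is the discriminator's estimated probability that $x$ is real. $p_{\text{data}}$ is the training distribution and $p_z$ is a simple noise distribution fed to the generator. For diffusion models, a common formulation is assumed here: the one popularized by later denoising diffusion probabilistic models. It defines a forward process that adds Gaussian noise over $T$ steps according to a variance schedule $\beta_t$. The learned model then undoes these steps one at a time, which is the iterative refinement described above.

```latex
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]

q(x_t \mid x_{t-1}) = \mathcal{N}\!\big(x_t;\ \sqrt{1 - \beta_t}\,x_{t-1},\ \beta_t \mathbf{I}\big), \qquad t = 1, \dots, T
```

The discriminator maximizes $V$ by scoring real samples high and generated ones low. The generator minimizes $V$ by producing samples that $D$ scores as real.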

These are just a few of many approaches that can be used for generative AI. What all of these approaches have in common is that they convert inputs into a set of tokens, which are numerical representations of chunks of data. As long as your data can be converted into this standard token format, then in theory you could apply these methods to generate new data that look similar.
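A minimal sketch can show what "converting inputs into tokens" means for text: each distinct word gets an integer ID, so a sentence becomes a list of numbers and back again. This is a simplification that assumes whitespace-separated words. Production systems typically use subword tokenizers for text and other chunking schemes for images or audio. The function names here are hypothetical.

```python
from typing import Dict, List


def build_vocab(texts: List[str]) -> Dict[str, int]:
    """Assign each distinct whitespace-separated word the next unused integer ID."""
    vocab: Dict[str, int] = {}
    for text in texts:
        for word in text.split():
            vocab.setdefault(word, len(vocab))
    return vocab


def encode(text: str, vocab: Dict[str, int]) -> List[int]:
    """Turn text into token IDs (raises KeyError for words not in the vocabulary)."""
    return [vocab[word] for word in text.split()]


def decode(ids: List[int], vocab: Dict[str, int]) -> str:
    """Map token IDs back to words."""
    inverse = {i: w for w, i in vocab.items()}
    return " ".join(inverse[i] for i in ids)


vocab = build_vocab(["a chair has four legs", "a table has four legs"])
ids = encode("a table has legs", vocab)
print(ids)                 # → [0, 5, 2, 4]
print(decode(ids, vocab))  # → a table has legs
```

Once data is in this numeric form, the same modeling machinery can be applied whatever the original medium was.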

But while generative models can achieve incredible results, they aren't the best choice for all types of data. For tasks that involve making predictions on structured data, like the tabular data in a spreadsheet, generative AI models tend to be outperformed by traditional machine-learning methods, says Devavrat Shah, the Andrew and Erna Viterbi Professor in Electrical Engineering and Computer Science at MIT and a member of IDSS and of the Laboratory for Information and Decision Systems.

"Previously, humans had to talk to machines in the language of machines to make things happen. Now, this interface has figured out how to talk to both humans and machines," says Shah. Generative AI chatbots are now being used in call centers to field questions from human customers, but this application underscores one potential red flag of implementing these models: worker displacement.

One promising future direction Isola sees for generative AI is its use for fabrication. Instead of having a model make an image of a chair, perhaps it could generate a plan for a chair that could be produced. He also sees future uses for generative AI systems in developing more generally intelligent AI agents.

"We have the ability to think and dream in our heads, to come up with interesting ideas or plans, and I think generative AI is one of the tools that will empower agents to do that, as well," Isola says.

Two additional recent advances that will be discussed in more detail below have played a critical part in generative AI going mainstream: transformers and the breakthrough language models they enabled. Transformers are a type of machine learning that made it possible for researchers to train ever-larger models without having to label all of the data in advance.
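Language models need no hand labeling because the training targets come from the text itself: the "label" for each position is simply the token that follows it. A minimal sketch, in plain Python rather than any particular framework, shows how a sequence of unlabeled token IDs becomes (context, target) training pairs. The function name and token IDs are hypothetical. A transformer would then be trained to predict each target from its context window.

```python
from typing import List, Tuple


def next_token_pairs(token_ids: List[int], context: int) -> List[Tuple[List[int], int]]:
    """Slide a fixed-size window over unlabeled tokens; each window's target is the next token."""
    pairs = []
    for end in range(context, len(token_ids)):
        window = token_ids[end - context:end]  # what the model gets to see
        target = token_ids[end]                # what it is trained to predict
        pairs.append((window, target))
    return pairs


tokens = [7, 3, 9, 1, 4]  # hypothetical token IDs from raw, unlabeled text
for window, target in next_token_pairs(tokens, context=2):
    print(window, "->", target)
# [7, 3] -> 9
# [3, 9] -> 1
# [9, 1] -> 4
```

Because every stretch of text yields pairs like these automatically, the amount of usable training data grows with the size of the corpus rather than with the number of human annotators.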

This is the basis for tools like Dall-E that automatically create images from a text description or generate text captions from images. These breakthroughs notwithstanding, we are still in the early days of using generative AI to create readable text and photorealistic stylized graphics. Early implementations have had problems with accuracy and bias, as well as being prone to hallucinations and producing strange answers.

Going forward, this technology could help write code, design new drugs, develop products, redesign business processes and transform supply chains. Generative AI starts with a prompt that could be in the form of text, an image, a video, a design, musical notes, or any other input that the AI system can process.

Researchers have been creating AI and other tools for programmatically generating content since the early days of AI. The earliest approaches, known as rule-based systems and later as "expert systems," used explicitly crafted rules for generating responses or data sets. Neural networks, which form the basis of many of today's AI and machine-learning applications, flipped the problem around: instead of following rules written by people, they learn the rules from patterns in existing data sets.
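A deliberately tiny example makes the contrast concrete. A logical AND is written by hand as an explicit rule, as in a rule-based system. Alongside it, a single artificial neuron infers equivalent behavior from labeled examples. The neuron is a perceptron, chosen here only for simplicity; modern neural networks are vastly larger and trained with different methods. All names are illustrative.

```python
from typing import List, Tuple

Example = Tuple[Tuple[int, int], int]


def rule_based_and(x1: int, x2: int) -> int:
    """Rule-based approach: a person writes the logic explicitly."""
    return 1 if x1 == 1 and x2 == 1 else 0


def predict(w: List[float], b: float, x1: int, x2: int) -> int:
    """A single neuron: weighted sum plus bias, thresholded at zero."""
    return 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0


def train_perceptron(examples: List[Example], epochs: int = 20, lr: float = 1.0):
    """Learned approach: adjust weights and bias from labeled examples (perceptron rule)."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        for (x1, x2), label in examples:
            error = label - predict(w, b, x1, x2)
            # The update is nonzero only when the prediction was wrong.
            w[0] += lr * error * x1
            w[1] += lr * error * x2
            b += lr * error
    return w, b


# Labeled examples, produced here from the hand-written rule so the two can be compared.
data = [((x1, x2), rule_based_and(x1, x2)) for x1 in (0, 1) for x2 in (0, 1)]
w, b = train_perceptron(data)
print(all(predict(w, b, *x) == y for x, y in data))  # → True
```

The learned version never sees the rule itself, only input-output pairs. That is the reversal the paragraph describes.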

Developed in the 1950s and 1960s, the first neural networks were limited by a lack of computational power and small data sets. It was not until the advent of big data in the mid-2000s and improvements in computer hardware that neural networks became practical for generating content. The field accelerated when researchers found a way to get neural networks to run in parallel across the graphics processing units (GPUs) that the computer gaming industry was using to render video games.

ChatGPT, Dall-E and Gemini (formerly Bard) are popular generative AI interfaces.

Dall-E

Trained on a large data set of images and their associated text descriptions, Dall-E is an example of a multimodal AI application that identifies connections across multiple media, such as vision, text and audio. In this case, it connects the meaning of words to visual elements.

It enables users to generate imagery in multiple styles driven by user prompts.

ChatGPT

The AI-powered chatbot that took the world by storm in November 2022 was built on OpenAI's GPT-3.5 implementation.
